Teams often treat an MVP as “ship something small, then measure whatever is easy.” That pattern creates busy dashboards and weak decisions. The point of MVP metrics is not to prove activity. It is to reduce uncertainty fast, with numbers that map to real customer value and clear business outcomes.

A practical way to keep focus is to run metrics in two modes. Pre-MVP metrics validate the problem and the “first value” experience. Post-MVP metrics prove repeatable value delivery, retention, and monetization. The shift matters because the questions change from “Is this worth building?” to “Can this grow without burning cash?”


What changes before and after an MVP

Before an MVP exists, your biggest risks tend to be: building the wrong thing, building for the wrong buyer, and choosing a workflow that never becomes a habit. After an MVP is live, the biggest risks shift toward churn, weak unit economics, and scaling a product that works only with high-touch support.

A clean way to think about this is: pre-MVP metrics are validation metrics, while post-MVP metrics are value and efficiency metrics. Both can sit inside AARRR (Acquisition, Activation, Retention, Referral, Revenue), but the weighting changes sharply over time.

Pre-MVP metrics: validate demand and your path

Pre-MVP is not “no data.” It is the phase where you decide what evidence is strong enough to justify investing engineering time. You can measure with landing pages, prototypes, concierge tests, paid pilots, and tightly scoped beta releases.

You get signal from a mix of qualitative and quantitative inputs, but the goal is the same: confirm that a defined user can reach a meaningful outcome quickly and wants to repeat it.

Common pre-MVP sources of signal include:

- Customer interviews

- Usability tests

- Landing page conversion

- Waitlist to signup conversion

- Prototype task completion

The pre-MVP scorecard that stays honest

Most early teams track too many top-of-funnel counts. A better scorecard emphasizes pain, intent, activation, and time-to-value.

Here are pre-MVP metrics that tend to stay actionable across models:

- Problem severity (qual): A simple 1 to 10 pain score is useful when paired with context. High scores with vivid “recent painful moment” stories beat polite interest.

- Current solution dissatisfaction (qual): If people already pay or spend time on workarounds, you have a clearer replacement story.

- Landing page conversion (quant): Share of visitors who leave an email, request a demo, or join a waitlist. It is not a finish line, but it tests positioning and channel fit.

- Activation rate (quant): Percent of signups that complete the core action that represents first value.

- Time-to-value (quant): Time from signup to the first meaningful outcome. Long time-to-value is often a UX or onboarding issue, not a marketing issue.

- Problem resolution rate (qual plus quant): After the user completes the workflow, ask if the product solved the problem. Track the percentage saying “yes” among activated users.
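The three quantitative scorecard items above can be computed from a very small record per tester. The sketch below assumes a hypothetical `Tester` record with a signup time, the time of first value (or `None`), and the survey answer (or `None`); it counts resolution only among activated users who answered, which is one reasonable reading of "among activated users."

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Tester:
    """One signup from a validation sprint (hypothetical record shape)."""
    user_id: str
    signed_up_at: datetime
    first_value_at: Optional[datetime]  # None if the user never reached first value
    problem_solved: Optional[bool]      # survey answer; None if not asked or not answered


def activation_rate(testers: list[Tester]) -> float:
    """Share of signups that completed the core action representing first value."""
    if not testers:
        return 0.0
    activated = sum(1 for t in testers if t.first_value_at is not None)
    return activated / len(testers)


def median_time_to_value_minutes(testers: list[Tester]) -> Optional[float]:
    """Median minutes from signup to first value, over activated users only."""
    minutes = [
        (t.first_value_at - t.signed_up_at).total_seconds() / 60
        for t in testers
        if t.first_value_at is not None
    ]
    return median(minutes) if minutes else None


def problem_resolution_rate(testers: list[Tester]) -> Optional[float]:
    """Share of activated users who answered the survey and said the problem was solved."""
    answers = [
        t.problem_solved
        for t in testers
        if t.first_value_at is not None and t.problem_solved is not None
    ]
    return sum(answers) / len(answers) if answers else None


# Example with four hypothetical testers
start = datetime(2024, 5, 1, 9, 0)
sample = [
    Tester("a", start, start + timedelta(minutes=10), True),
    Tester("b", start, start + timedelta(minutes=30), False),
    Tester("c", start, None, None),                          # never activated
    Tester("d", start, start + timedelta(minutes=20), None),  # activated, skipped survey
]
print(activation_rate(sample))               # 0.75
print(median_time_to_value_minutes(sample))  # 20.0
print(problem_resolution_rate(sample))       # 0.5
```

Using the median rather than the mean for time-to-value keeps one tester who left a tab open overnight from dominating the number.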

If you want one working rule: pre-MVP success is rarely “more signups.” It is “more people reaching value, faster.”

Setting stage gates before you write code

A disciplined approach is to set stage gates: numeric thresholds that decide whether you proceed, revise, or stop. Teams that do this well define the measurement plan during discovery, not after launch. EVNE Developers often frames this as stage-gated loops where each step requires real signals before moving forward.

After a short validation sprint, a gate might be as basic as “X% of targeted testers complete the workflow in under Y minutes and report the job as solved.” The specific numbers vary by domain, yet the idea stays consistent: no signal, no scale.
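A gate like this is easiest to respect when it is written down as a rule before the sprint starts. The sketch below encodes the example gate with three outcomes (proceed, revise, stop); the thresholds `max_minutes`, `proceed_at`, and `stop_below` are hypothetical placeholders for the X and Y your team agrees on.

```python
from enum import Enum
from typing import Optional


class Decision(Enum):
    PROCEED = "proceed"
    REVISE = "revise"
    STOP = "stop"


def stage_gate(
    results: list[tuple[Optional[float], bool]],
    max_minutes: float,
    proceed_at: float,
    stop_below: float,
) -> Decision:
    """Apply a 'complete the workflow in under Y minutes and report it solved' gate.

    results: one (minutes_to_complete, reported_solved) pair per targeted tester;
             minutes is None when the tester never completed the workflow.
    proceed_at / stop_below: hypothetical thresholds agreed before the sprint.
    """
    if not 0 <= stop_below <= proceed_at <= 1:
        raise ValueError("expected 0 <= stop_below <= proceed_at <= 1")
    if not results:
        return Decision.STOP  # no signal, no scale
    passed = sum(
        1
        for minutes, solved in results
        if minutes is not None and minutes < max_minutes and solved
    )
    share = passed / len(results)
    if share >= proceed_at:
        return Decision.PROCEED
    if share < stop_below:
        return Decision.STOP
    return Decision.REVISE
```

With ten testers of whom seven finish in under ten minutes and report the job solved (share 0.7), `stage_gate(results, 10, 0.6, 0.3)` returns `Decision.PROCEED`, while raising `proceed_at` to 0.8 turns the same evidence into `Decision.REVISE`.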

Post-MVP metrics: retention, expansion, and sustainable acquisition

Once the MVP reliably delivers value to a narrow segment, the product is no longer judged by interest. It is judged by repeat usage and business viability.

This is where teams shift from “Did they try it?” to “Did they come back, and did the business improve?”

Post-MVP metrics that typically matter most:

- Cohort retention: Day 1, Day 7, Day 30 retention curves by signup cohort. Curves tell you more than a single retention number.

- Stickiness ratio: DAU/MAU or WAU/MAU, depending on expected frequency. Use a cadence that matches the job-to-be-done.

- Churn: Logo churn and revenue churn. Track reasons via cancellation flows and support tagging, then validate with behavior.

- Monetization metrics: MRR/ARR, ARPU, conversion to paid, expansion revenue, refunds, delinquency.

- Unit economics: CAC, LTV, CAC payback period, gross margin. These numbers turn “growth” into something you can fund.
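Cohort retention curves need two inputs: when each user signed up and which days they were active. The sketch below groups users into weekly signup cohorts and computes classic Day-N retention (active exactly N days after signup). The input dictionaries are a hypothetical shape; users too new to have reached day N are left out of that point so young cohorts do not look artificially weak.

```python
from collections import defaultdict
from datetime import date, timedelta
from typing import Optional


def week_start(d: date) -> date:
    """Monday of the week containing d; used here as the cohort key."""
    return d - timedelta(days=d.weekday())


def retention_curves(
    signups: dict[str, date],
    activity: dict[str, set[date]],
    today: date,
    days: tuple[int, ...] = (1, 7, 30),
) -> dict[date, dict[int, Optional[float]]]:
    """Classic Day-N retention by weekly signup cohort.

    A user counts as retained on day N if they were active exactly N days
    after signup. Users whose day N is still in the future are excluded from
    that point; if nobody in the cohort is old enough yet, the point is None.
    """
    cohorts: dict[date, list[str]] = defaultdict(list)
    for user_id, signed_up in signups.items():
        cohorts[week_start(signed_up)].append(user_id)

    curves: dict[date, dict[int, Optional[float]]] = {}
    for cohort, users in sorted(cohorts.items()):
        curve: dict[int, Optional[float]] = {}
        for n in days:
            eligible = [u for u in users if signups[u] + timedelta(days=n) <= today]
            if not eligible:
                curve[n] = None
                continue
            retained = sum(
                1 for u in eligible
                if signups[u] + timedelta(days=n) in activity.get(u, set())
            )
            curve[n] = retained / len(eligible)
        curves[cohort] = curve
    return curves
```

Plotting one line per cohort from this output is what makes the curve shape visible: a curve that flattens above zero suggests a retained core, while one that keeps sliding toward zero does not.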

A simple way to keep post-MVP metrics decision-ready is to separate outputs from inputs:

- Outputs: retention curve, net revenue retention, MRR growth

- Inputs: activation rate, time-to-value, feature adoption tied to the core workflow
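Net revenue retention and the unit economics from the previous list reduce to short formulas. The sketch below uses common simplified definitions (NRR over existing customers only, payback on gross-margin-adjusted ARPU, and a steady-state LTV of monthly gross profit divided by monthly churn); your finance team may define these differently, so treat the formulas as assumptions.

```python
def net_revenue_retention(
    starting_mrr: float, expansion: float, contraction: float, churned: float
) -> float:
    """NRR for one existing-customer base over a period; new-customer MRR is excluded."""
    if starting_mrr <= 0:
        raise ValueError("starting_mrr must be positive")
    return (starting_mrr + expansion - contraction - churned) / starting_mrr


def cac_payback_months(cac: float, arpu_monthly: float, gross_margin: float) -> float:
    """Months of gross profit from one customer needed to recover its acquisition cost."""
    return cac / (arpu_monthly * gross_margin)


def simple_ltv(arpu_monthly: float, gross_margin: float, monthly_churn: float) -> float:
    """Steady-state approximation: monthly gross profit per customer / monthly churn rate."""
    if monthly_churn <= 0:
        raise ValueError("monthly_churn must be positive")
    return arpu_monthly * gross_margin / monthly_churn
```

For example, a base starting at 10,000 MRR that gains 1,500 in expansion and loses 300 to downgrades and 700 to cancellations has an NRR of 1.05; a 600 CAC against 100 ARPU at 80% gross margin pays back in 7.5 months.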

A quick reality check on “growth”

If acquisition rises while retention stays flat, you are buying churn. Sustainable growth nearly always comes from improving what happens after signup: activation, repeat value, and expansion.
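A small simulation shows why. Assuming a constant monthly churn rate, the active base converges toward new customers per month divided by the churn rate, so past a point acquisition spend mostly replaces the customers you lose. The function name and parameters below are illustrative.

```python
def simulate_active_customers(
    new_per_month: float, monthly_churn: float, months: int, start: float = 0.0
) -> list[float]:
    """Active customers at the end of each month, assuming a constant churn rate.

    Each month, churn removes a fixed share of the existing base, then new
    customers are added. The base converges toward new_per_month / monthly_churn.
    """
    active = start
    history = []
    for _ in range(months):
        active = active * (1 - monthly_churn) + new_per_month
        history.append(active)
    return history


# 100 new customers a month at 10% churn plateaus near 1,000.
# Doubling acquisition to 200 plateaus near 2,000 but doubles the spend;
# halving churn to 5% reaches roughly the same 2,000 with no extra acquisition.
print(round(simulate_active_customers(100, 0.10, 120)[-1]))
print(round(simulate_active_customers(100, 0.05, 300)[-1]))
```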

A few metrics tend to expose vanity tracking fast:

- Signups: can rise even when the product fails

- Pageviews: rarely predict revenue

- Total downloads: weak without activation and retention context

Looking to Build an MVP without worries about strategy planning?

EVNE Developers is a dedicated software development team with a product mindset.

We’ll be happy to help you bring your idea to life and successfully monetize it.

North Star metrics by business model (pre and post)

A North Star metric works when it captures value delivery in a way that connects to revenue over time. The best North Stars are measurable, hard to game, and directly tied to the customer’s “job.”

For many teams, the North Star evolves. Pre-MVP, it is often a proxy for value delivery. Post-MVP, it becomes a sustained value metric, usually paired with revenue quality.

Below is a model-based view that product teams can use as a starting template.

| Business model | Pre-MVP North Star proxy (validation) | Post-MVP North Star (growth) | Input metrics that move it |
| --- | --- | --- | --- |
| B2B SaaS | Activated accounts completing the core workflow | Weekly Active Teams (or Net Revenue Retention) | activation rate, time-to-value, retention by role, expansion events |
| Product-led SaaS | Users reaching “Aha” within first session/day | WAU with key action (plus paid conversion rate) | onboarding completion, feature adoption, upgrade trigger rate |
| Marketplace | Successful matches or fulfilled requests in a test region | Transactions (rides, bookings, jobs) | liquidity time, fill rate, cancellation rate, repeat transactors |
| E-commerce | First purchase conversion for a narrow catalog | Repeat purchase rate or purchases per customer per month | add-to-cart rate, checkout completion, delivery time, refund rate |
| Subscription content/media | Trial users hitting a consumption threshold | Retained active subscribers (30-day retention) | content completion, days active/week, reactivation rate |
| Consumer mobile app | Install to meaningful action completion | DAU with key action | D1/D7 retention, session frequency, notification opt-in quality |

One sentence to remember: your North Star should represent value created, not effort spent.
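The difference between value created and effort spent shows up clearly with a "WAU with key action" North Star. The sketch below counts weekly active users two ways from a hypothetical `(user_id, event_name, day)` event list; `report_shared` is an illustrative key action, not a prescribed event.

```python
from datetime import date, timedelta
from typing import Optional

Event = tuple[str, str, date]  # (user_id, event_name, day), hypothetical shape


def weekly_active_users(
    events: list[Event], week_start: date, key_action: Optional[str] = None
) -> int:
    """Distinct users active in the 7 days starting at week_start.

    With key_action=None any event counts (effort spent); with a key action,
    only users who performed it count (value created).
    """
    week_end = week_start + timedelta(days=7)
    return len({
        user
        for user, name, day in events
        if week_start <= day < week_end and (key_action is None or name == key_action)
    })


events = [
    ("u1", "report_shared", date(2024, 3, 4)),
    ("u2", "page_viewed", date(2024, 3, 5)),
    ("u3", "page_viewed", date(2024, 3, 6)),
    ("u3", "report_shared", date(2024, 3, 7)),
    ("u4", "report_shared", date(2024, 3, 11)),  # falls in the following week
]
print(weekly_active_users(events, date(2024, 3, 4)))                   # 3
print(weekly_active_users(events, date(2024, 3, 4), "report_shared"))  # 2
```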

Picking 3 inputs, not 30 metrics

Even strong teams can drown in instrumentation. A practical operating model is one North Star plus three input metrics. EVNE Developers often recommends defining the North Star and the three inputs that move it, then holding that set steady long enough to learn.

A useful way to choose inputs is to map the value path:

- user arrives

- user activates

- user repeats the core workflow

- user pays or expands

Your three inputs should sit on the biggest drop-offs in that path.
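Finding those drop-offs is a matter of comparing each step with the one before it. The sketch below takes ordered `(step_name, users_reaching_step)` pairs, a hypothetical input shape, and ranks adjacent transitions by conversion, worst first.

```python
def biggest_drop_offs(
    path: list[tuple[str, int]], top: int = 3
) -> list[tuple[str, str, float]]:
    """Rank adjacent value-path steps by step-to-step conversion, worst first.

    path: ordered (step_name, users_reaching_step) pairs.
    Returns (from_step, to_step, conversion) for the `top` weakest transitions.
    """
    transitions = []
    for (prev_name, prev_count), (name, count) in zip(path, path[1:]):
        conversion = count / prev_count if prev_count else 0.0
        transitions.append((prev_name, name, conversion))
    return sorted(transitions, key=lambda t: t[2])[:top]


path = [("arrived", 1000), ("activated", 300), ("repeated", 150), ("paid", 30)]
for step_from, step_to, conversion in biggest_drop_offs(path):
    print(f"{step_from} -> {step_to}: {conversion:.0%}")
```

Here the weakest transition is repeated to paid (20%), followed by arrived to activated (30%), which points the three inputs at monetization and activation before anything else.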

Here are examples of inputs that usually drive action:

- Activation rate: Percent reaching first value

- Time-to-value: Minutes or hours to first value

- Retention driver adoption: Percent using the feature that predicts repeat usage

Proving the Concept for FinTech Startup with a Smart Algorithm for Detecting Subscriptions

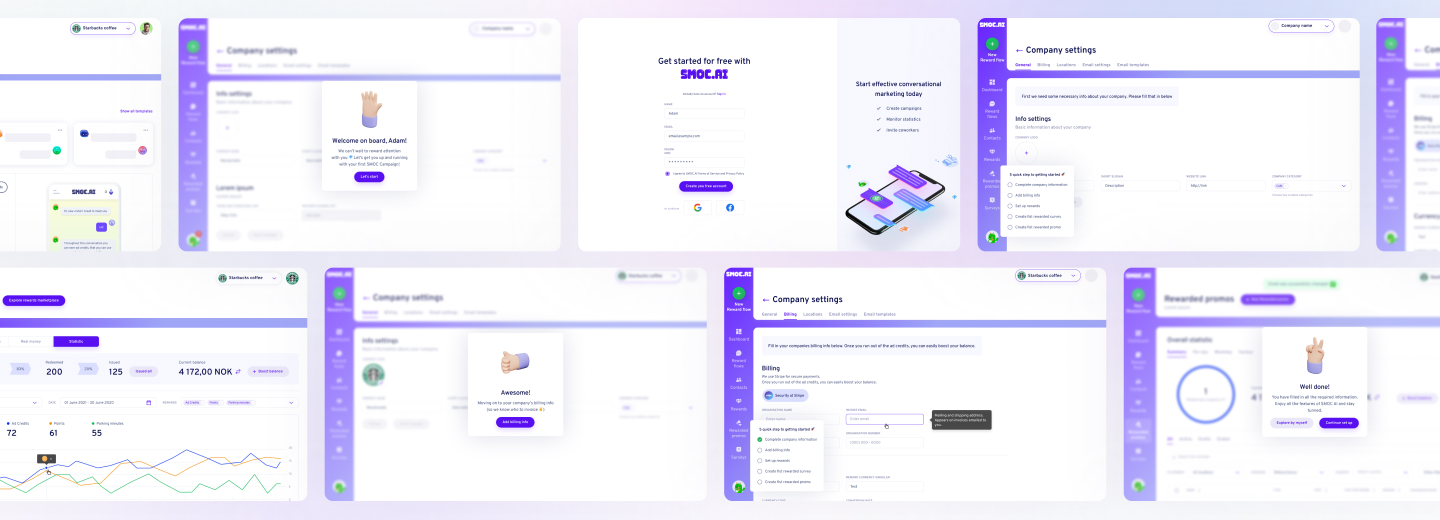

Scaling from Prototype into a User-Friendly and Conversational Marketing Platform

Instrumentation from week one (without slowing delivery)

Metrics fail when events are vague or inconsistent. “Button clicked” is not a product insight. Event design should reflect the customer workflow.

A lean instrumentation setup typically includes product analytics for events, error tracking for quality, and a simple metrics store that makes the numbers visible across product, engineering, and go-to-market. In regulated domains, add consent tracking and data minimization patterns early to avoid rework.

A lightweight build plan that keeps measurement reliable:

- Event taxonomy: Name events after customer outcomes

- Identity rules: Decide how anonymous users become known users

- Source of truth: One dashboard where leadership reviews the same definitions weekly

- Quality checks: Automated tests for key events so releases do not break reporting
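The taxonomy, identity, and quality-check items above can start as plain code in the repository. The sketch below is tool-agnostic and does not use any analytics vendor's API: the event names and required properties in `EVENT_SCHEMA` are hypothetical, `IdentityMap` stands in for whatever aliasing your analytics stack provides, and the last function is a pytest-style check that could run in CI.

```python
# Hypothetical event taxonomy: events are named after customer outcomes,
# each listing the properties downstream analysis relies on.
EVENT_SCHEMA: dict[str, set[str]] = {
    "workspace_created": {"workspace_id"},
    "report_shared": {"report_id", "recipient_count"},
    "subscription_started": {"plan", "mrr"},
}


def validate_event(name: str, properties: dict) -> list[str]:
    """Return a list of problems; an empty list means the event matches the taxonomy."""
    if name not in EVENT_SCHEMA:
        return [f"unknown event: {name}"]
    missing = EVENT_SCHEMA[name] - set(properties)
    return [f"{name}: missing property '{p}'" for p in sorted(missing)]


class IdentityMap:
    """Identity rule sketch: when an anonymous visitor signs up, their anonymous
    ID is aliased to the known user ID so earlier events can be joined up."""

    def __init__(self) -> None:
        self._aliases: dict[str, str] = {}

    def alias(self, anonymous_id: str, user_id: str) -> None:
        self._aliases[anonymous_id] = user_id

    def resolve(self, actor_id: str) -> str:
        # Unknown IDs pass through unchanged (already known, or still anonymous).
        return self._aliases.get(actor_id, actor_id)


def test_report_shared_event_is_valid() -> None:
    # Release check: the payload the app sends must still match the taxonomy.
    assert validate_event("report_shared", {"report_id": "r1", "recipient_count": 2}) == []
```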

Common traps that waste MVP cycles

Most metric mistakes come from good intentions paired with weak definitions.

A few fixes that consistently save time and money:

- Vanity counts: Replace them with funnel rates tied to the value path.

- Siloed team metrics: Keep one shared North Star, then give each function a small set of inputs it can influence.

- No segmentation: Always segment by persona, channel, and cohort. Averages hide failure modes.

- No decision rule: Every tracked metric should have a “what we do if it goes up/down” note.
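The segmentation trap is easy to demonstrate. In the sketch below, with hypothetical channel names and counts, a 40% overall activation rate hides one channel activating at 10% and another at 70%.

```python
from collections import defaultdict


def activation_by_segment(
    signups: list[tuple[str, bool]],
) -> tuple[float, dict[str, float]]:
    """Overall activation rate plus the rate per segment (e.g. acquisition channel).

    signups: one (segment, activated) pair per signup.
    """
    totals: dict[str, int] = defaultdict(int)
    activated: dict[str, int] = defaultdict(int)
    for segment, did_activate in signups:
        totals[segment] += 1
        activated[segment] += int(did_activate)
    overall = sum(activated.values()) / len(signups) if signups else 0.0
    by_segment = {s: activated[s] / totals[s] for s in totals}
    return overall, by_segment


# 100 signups per hypothetical channel: 10 activate from paid social, 70 from referrals
data = [("paid_social", i < 10) for i in range(100)] + [("referral", i < 70) for i in range(100)]
overall, by_channel = activation_by_segment(data)
print(overall)     # 0.4
print(by_channel)  # {'paid_social': 0.1, 'referral': 0.7}
```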

If you do only one thing, make it this: define the action you will take when the metric misses the threshold.


Conclusion

Teams get value from metrics when they review them with ownership and repeatable structure. A weekly metrics review works well when it is short, consistent, and tied to the roadmap.

A practical agenda is: North Star trend, the three inputs, the biggest funnel drop, one experiment result, and one decision. Keep a single owner per metric who can explain movement and propose the next test.

This rhythm builds a product culture where evidence beats opinion, without waiting for perfect data systems. It also keeps the MVP honest: you are not “shipping features,” you are buying down risk and compounding what works.

Product metrics for an MVP (Minimum Viable Product) are key performance indicators that help teams measure the success, usability, and market fit of their initial product version. These metrics typically focus on user engagement, retention, feedback, and core functionality to validate assumptions and guide further development.

Select a North Star metric that aligns with your product’s core value proposition and long-term vision. It should reflect the primary outcome you want users to achieve and be actionable for your team.

Regularly review your product metrics, weekly or every two weeks in the early stages, to quickly identify trends, validate assumptions, and iterate on your MVP based on real user data.

Your metrics can and should change as the product matures and user needs shift. Reassess them regularly to ensure they align with your current goals and growth stage.

About author

Roman Bondarenko is the CEO of EVNE Developers. He is an expert in software development and technology entrepreneurship and has 10+ years of experience in digital transformation consulting in Healthcare, FinTech, Supply Chain and Logistics.

Author | CEO EVNE Developers