Regulated software lives under a different standard of proof.

It is not enough for a release to pass functional QA and look stable in staging. Teams also need evidence that high-risk behaviors were tested with the right depth, that every critical requirement is mapped to verification and validation results, and that real users accepted the product in a controlled setting. That is why test strategy for regulated software is less about a long list of test cases and more about a system of decisions, records, and release gates.

In healthcare, fintech, insurance, energy, and privacy-heavy SaaS, this structure protects more than product quality. It protects patient safety, transaction integrity, personal data, audit readiness, and the business itself.


Why regulated software needs a different test strategy

A consumer app can sometimes recover from weak documentation. A regulated product usually cannot. Auditors, customers, and compliance teams want proof that the product was built against approved requirements, tested against defined acceptance criteria, and released only after unresolved risk was reviewed and accepted by the right people.

That expectation is visible across major standards. FDA 21 CFR 820.30 requires design outputs to be verified against design inputs. ISO 13485 expects documented verification and validation with acceptance criteria. ISO 14971 ties product risk to controls that must be checked for effectiveness. 21 CFR Part 11, HIPAA, GDPR, PCI DSS, and similar rules add another layer by requiring strong evidence around data integrity, access control, audit trails, and record trustworthiness.

A regulated test strategy, then, has three non-negotiable pillars: risk-based depth, traceability, and user acceptance testing that reflects intended use.

| Standard or regulation | Typical domain | What testing must show |
| --- | --- | --- |
| FDA 21 CFR 820.30 | Medical devices | Design outputs verified against inputs, documented validation evidence |
| ISO 13485 | Medical devices | Planned verification and validation, approved acceptance criteria |
| ISO 14971 | Medical devices | Risks identified, controlled, and tested for effectiveness |
| 21 CFR Part 11 | Electronic records and signatures | Validated system behavior, audit trails, controlled access |
| HIPAA / GDPR | Health data and personal data | Privacy and security controls work as designed, test data is handled safely |
| PCI DSS / SOX | Payments and financial controls | Transaction integrity, segregation of duties, auditable controls |

Risk-based testing decides where rigor goes

Risk-based testing is the backbone of a regulated strategy because not every function deserves the same level of attention. A typo in a help screen is not equivalent to a failure in dosage calculation, funds transfer logic, identity verification, or audit logging. Teams need a repeatable way to decide where testing should be deepest, most formal, and most frequently repeated.

That usually starts during requirements and design, not after development. Risk workshops, hazard analysis, FMEA, fault tree analysis, or a simple severity-by-likelihood matrix can all work if they are applied consistently. The exact format matters less than the result: a ranked view of what can fail, how serious the impact would be, how likely the failure is, and how hard it would be to detect before harm occurs.

The strongest teams treat risk as a live input to QA. When a requirement changes, the risk rating is reviewed. When a severe defect appears, regression scope expands. When production incidents point to a weak control, new tests are added and old assumptions are challenged.

A practical risk score usually considers:

- patient or customer harm

- financial loss exposure

- privacy or security impact

- regulatory reporting impact

- operational downtime

- likelihood of occurrence

- detectability before release

- frequency of change in the component

Once those inputs are visible, test depth becomes easier to justify. High-risk functions may need formal test scripts, negative testing, data integrity checks, boundary coverage, security validation, peer review, and independent sign-off. Lower-risk areas still get tested, but not with the same volume or ceremony.
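One way to make that justification repeatable is to put the scoring rule in code. The sketch below is a minimal illustration, not a prescribed method: it uses an FMEA-style product of severity, likelihood, and detectability, and the 1 to 5 scales, the thresholds, the tier names, and the example ratings are all assumptions a team would replace with its own approved values.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RiskItem:
    """One function under assessment. Scales and ratings are illustrative."""
    name: str
    severity: int       # 1 (negligible) .. 5 (serious harm or loss)
    likelihood: int     # 1 (rare) .. 5 (frequent)
    detectability: int  # 1 (almost always caught before release) .. 5 (rarely caught)

    def score(self) -> int:
        # FMEA-style priority number: a higher score calls for more rigor.
        return self.severity * self.likelihood * self.detectability


def depth_for(item: RiskItem) -> str:
    """Map a risk item to a test depth tier. Thresholds are assumptions."""
    # Severity override: high-severity functions get formal testing
    # even when they are unlikely to fail or easy to detect.
    if item.severity >= 4 or item.score() >= 40:
        return "formal"    # scripted tests, negative cases, independent sign-off
    if item.score() >= 12:
        return "standard"  # planned tests with recorded results
    return "light"         # smoke or exploratory coverage


items = [
    RiskItem("help screen text", severity=1, likelihood=3, detectability=1),
    RiskItem("dosage calculation", severity=5, likelihood=2, detectability=4),
    RiskItem("report export", severity=2, likelihood=3, detectability=2),
    RiskItem("audit logging", severity=4, likelihood=2, detectability=3),
]

# Rank from highest to lowest score so the riskiest functions are reviewed first.
ranked = sorted(items, key=lambda i: i.score(), reverse=True)
for item in ranked:
    print(f"{item.name:20} score={item.score():3} depth={depth_for(item)}")
```

The severity override is a deliberate design choice in this sketch: a pure multiplication can hide a catastrophic but rare failure behind a low number, so severity alone is allowed to force the formal tier.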

This is also where many teams gain speed rather than lose it. By putting heavy effort where failure matters most, they avoid spreading the same level of manual checking across every feature. The result is better risk coverage with less waste.

Traceability is what makes the strategy auditable

A regulated test strategy fails if the team cannot answer a simple question quickly: show me which tests prove this requirement passed, which risks it controls, and whether any related defects remain open.

Traceability provides that answer. In practice, it means maintaining links between requirements, risk items, design elements, test cases, test runs, defects, changes, and approvals. Some teams manage this in ALM software. Others start with a disciplined matrix. Either way, the rule is the same: every critical artifact should point to the next one in the chain.

Bidirectional traceability matters because audits do not move in one direction only. Sometimes an auditor starts with a requirement and asks for evidence. Sometimes they start with a defect and ask which requirements and releases were affected. Sometimes they begin with a risk control and want proof it was validated after a design change.

A useful trace model usually includes:

- Requirement to test: every critical requirement maps to one or more verification or validation activities

- Risk to control: each identified risk maps to a product control, process control, or both

- Control to evidence: each control maps to executed tests, reviews, or inspections

- Defect to impact: every defect links back to affected requirements, risks, and release decisions
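The trace model above can be sketched as a small link graph that is queried in both directions. This is only an illustration of the idea: the artifact IDs, the ID prefixes (`REQ-`, `TC-`, `RISK-`, `DEF-`), and the link list are hypothetical, and a real ALM tool would hold far more metadata per link.

```python
from collections import defaultdict

# Hypothetical trace links as (source artifact, target artifact).
LINKS = [
    ("RISK-7", "REQ-1"),  # the risk is controlled by this requirement
    ("REQ-1", "TC-10"),   # the requirement is verified by these tests
    ("REQ-1", "TC-11"),
    ("REQ-2", "TC-12"),
    ("TC-11", "DEF-3"),   # a defect raised while running this test
]
CRITICAL_REQUIREMENTS = ["REQ-1", "REQ-2", "REQ-3"]


def build_index(links):
    """Index every link twice so it can be followed in either direction."""
    forward, backward = defaultdict(set), defaultdict(set)
    for src, dst in links:
        forward[src].add(dst)
        backward[dst].add(src)
    return forward, backward


def reachable(start, index):
    """Return every artifact reachable from start within one index."""
    seen, stack = set(), [start]
    while stack:
        node = stack.pop()
        for nxt in index.get(node, ()):
            if nxt not in seen:
                seen.add(nxt)
                stack.append(nxt)
    return seen


forward, backward = build_index(LINKS)

# Auditor starts from a requirement: which tests and defects hang off it?
downstream = sorted(reachable("REQ-1", forward))

# Auditor starts from a defect: which tests, requirements, and risks are affected?
upstream = sorted(reachable("DEF-3", backward))

# Gap check: critical requirements with no linked test case at all.
untested = [
    req for req in CRITICAL_REQUIREMENTS
    if not any(target.startswith("TC-") for target in forward.get(req, ()))
]

print(downstream, upstream, untested)
```

Storing each link once and indexing it twice keeps the forward and backward views from drifting apart, which is the failure mode a hand-maintained matrix tends to hit first.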

This is where tooling earns its place. Jira with a strong test management add-on can work for some teams. Others need platforms built for stricter design controls and audit logs. The specific tool is less important than a few core capabilities: version control, approval history, role-based access, impact analysis, and reporting that can be exported without manual cleanup.

Weak traceability creates expensive friction. Teams lose time preparing for audits, re-testing the wrong areas after a change, or debating whether a requirement was ever validated. Strong traceability shortens those loops and turns compliance evidence into something teams can use every day, not just during an inspection.

Proving the Concept for FinTech Startup with a Smart Algorithm for Detecting Subscriptions

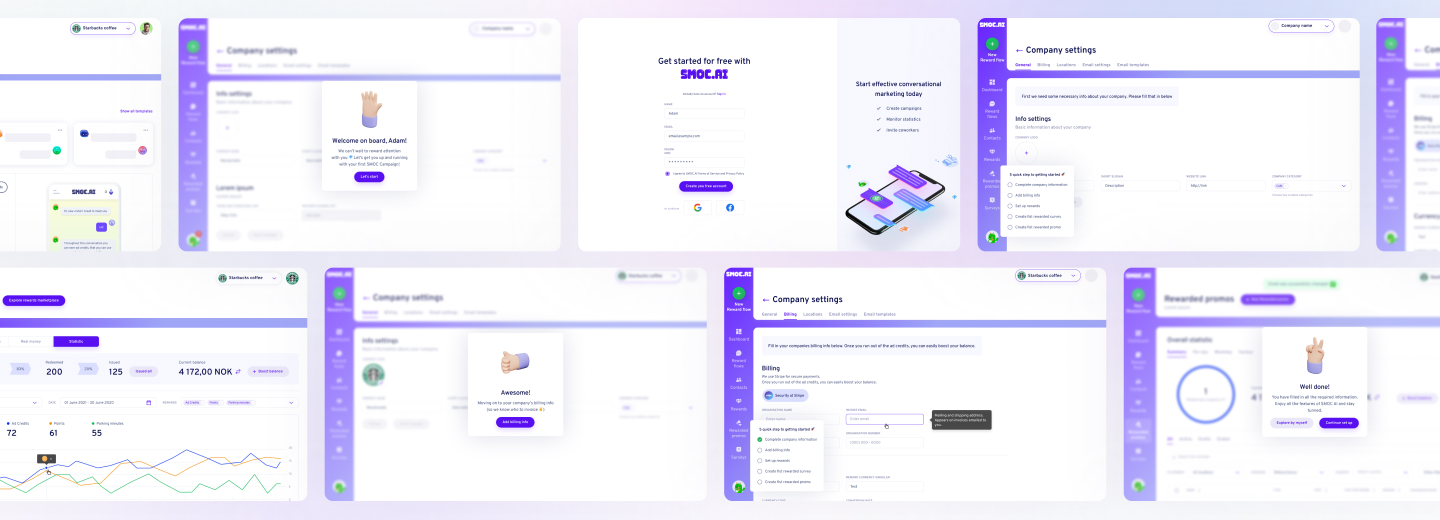

Scaling from Prototype into a User-Friendly and Conversational Marketing Platform

UAT is not a demo, it is controlled validation

User acceptance testing in regulated products is often misunderstood. It is not a friendly walkthrough at the end of the sprint. It is structured evidence that the software supports real user workflows, in realistic conditions, with defined acceptance criteria and documented outcomes.

In many regulated settings, UAT is closely tied to design validation. The goal is to show that the product meets user needs and intended use, not just technical specifications. A system can pass unit, integration, system, and security testing and still fail UAT because the actual workflow is unsafe, confusing, incomplete, or inconsistent with operating procedures.

That is why UAT should involve actual users or trained representatives who know the domain. In healthcare, that may be clinicians or operations staff. In finance, it may be analysts, compliance reviewers, or back-office specialists. In industrial and energy systems, it may be operators and site leads. QA supports execution, but business and operational stakeholders own the acceptance decision.

Good UAT documentation usually includes:

- Approved scripts: step-by-step scenarios mapped to user requirements and intended use

- Production-like setup: representative roles, integrations, permissions, and test data

- Execution evidence: timestamps, screenshots, logs, observed results, and deviations

- Formal sign-off: named approvers, defect disposition, and go or no-go status
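Those documentation elements can also be checked mechanically before anyone signs. The sketch below assumes a hypothetical `UatResult` record and a simple blocking policy (no approver, missing evidence, or an undispositioned deviation means no-go). The field names and the policy are illustrative assumptions, not a regulatory requirement.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List, Optional


@dataclass
class UatResult:
    """One executed UAT script. Field names are illustrative."""
    script_id: str
    requirement_id: str
    executed_by: str
    passed: bool
    executed_at: datetime
    evidence: List[str] = field(default_factory=list)  # screenshots, logs
    deviation: Optional[str] = None                    # what differed from the script
    deviation_dispositioned: bool = False              # reviewed and decided on


def go_no_go(results, approvers):
    """Return ("go" or "no-go", list of blocking reasons)."""
    blockers = []
    if not approvers:
        blockers.append("no named approver")
    for r in results:
        if not r.evidence:
            blockers.append(f"{r.script_id}: no execution evidence attached")
        if not r.passed and not r.deviation:
            blockers.append(f"{r.script_id}: failed without a recorded deviation")
        if r.deviation and not r.deviation_dispositioned:
            blockers.append(f"{r.script_id}: deviation not dispositioned")
    return ("go" if not blockers else "no-go", blockers)


when = datetime(2024, 1, 15, 9, 30, tzinfo=timezone.utc)
results = [
    UatResult("UAT-01", "UR-1", "nurse.a", True, when,
              evidence=["screenshot-01.png"]),
    UatResult("UAT-02", "UR-2", "nurse.b", False, when,
              evidence=["log-02.txt"],
              deviation="Alert routed to the wrong role"),
]

decision, blockers = go_no_go(results, approvers=["Clinical lead"])
print(decision, blockers)
```

In this sketch a failed script does not block release by itself. An open deviation does, which keeps the acceptance decision with the named approvers rather than with the pass rate.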

UAT should also test compliance-sensitive behavior. That includes audit trails, e-signatures, consent handling, alert routing, access restrictions, record retention, and exception handling. These checks often reveal issues that earlier phases miss because they depend on realistic combinations of users, data, timing, and operational steps.

One more rule matters here: the UAT environment must be controlled. If it does not reflect production conditions closely enough, the value of the evidence drops fast.

Metrics that support release decisions

Regulated teams need metrics, but not vanity metrics. Test case counts and pass percentages look neat in dashboards, yet they rarely answer the release question that matters: is the remaining risk acceptable, and can we prove it?

Better release metrics connect testing to risk and evidence quality. Examples include the percentage of high-risk requirements with passing tests, the percentage of risk controls validated after the latest change, open defects by severity in regulated workflows, traceability completeness, and failed UAT scenarios by business criticality.
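A few of these metrics are simple enough to compute straight from exported records. The following is a rough sketch under stated assumptions: the record shapes, the IDs, and the rule that a requirement counts as verified only when it has at least one linked test and every linked test passed are all illustrative choices.

```python
from collections import Counter

# Hypothetical export: each requirement with its linked test results.
requirements = [
    {"id": "REQ-1", "risk": "high", "tests": {"TC-10": "pass", "TC-11": "pass"}},
    {"id": "REQ-2", "risk": "high", "tests": {"TC-12": "fail"}},
    {"id": "REQ-3", "risk": "high", "tests": {}},
    {"id": "REQ-4", "risk": "low", "tests": {"TC-13": "pass"}},
]
open_defects = [
    {"id": "DEF-3", "severity": "major", "regulated_workflow": True},
    {"id": "DEF-4", "severity": "minor", "regulated_workflow": True},
    {"id": "DEF-5", "severity": "major", "regulated_workflow": False},
]


def pct(part, whole):
    """Percentage rounded to one decimal place; 0.0 when there is nothing to count."""
    return round(100 * part / whole, 1) if whole else 0.0


def is_verified(req):
    """Assumed rule: at least one linked test, and every linked test passed."""
    results = list(req["tests"].values())
    return bool(results) and all(r == "pass" for r in results)


high_risk = [r for r in requirements if r["risk"] == "high"]

metrics = {
    # Share of high-risk requirements with passing tests.
    "high_risk_verified_pct": pct(sum(is_verified(r) for r in high_risk), len(high_risk)),
    # Traceability completeness: requirements with at least one linked test.
    "traceability_complete_pct": pct(sum(bool(r["tests"]) for r in requirements), len(requirements)),
    # Open defects by severity, limited to regulated workflows.
    "open_defects_regulated": dict(
        Counter(d["severity"] for d in open_defects if d["regulated_workflow"])
    ),
}
print(metrics)
```

Note how the overall pass rate here would look healthy (three of four executed tests pass) while only a third of the high-risk requirements are actually verified.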

A useful rule is simple: if a metric does not help a release manager, QA lead, product owner, or quality representative decide what must happen next, it is probably noise.

Many teams also track defect escape rate in high-risk modules, time to close deviations discovered in UAT, and the share of releases that required audit evidence rework. Those numbers often reveal whether the strategy is working as an operating model, not just as a document.

Conclusion

The strongest test strategies are boring in the best possible way. They make release readiness predictable. Everyone knows which records must exist, which reviews must happen, and which unresolved items block approval.

The evidence package behind that predictability does not need hundreds of pages to be useful. It does need consistency.

A solid release evidence set often includes:

- approved requirements and acceptance criteria

- current risk register or hazard log

- traceability matrix or tool-generated trace report

- executed test results for critical flows

- defect log with severity and disposition

- UAT records and sign-offs

- deviation records and corrective actions

- release recommendation with named approvers
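A checklist like this can double as a release gate. The sketch below assumes a hypothetical package dictionary keyed by evidence type, where each entry records whether the item has been approved. The key names and the `approved` flag are illustrative, not taken from any specific tool.

```python
# Evidence items mirroring the list above; key names are assumptions.
REQUIRED_EVIDENCE = [
    "approved_requirements",
    "risk_register",
    "trace_report",
    "critical_test_results",
    "defect_log",
    "uat_signoffs",
    "deviation_records",
    "release_recommendation",
]


def missing_evidence(package):
    """Return required items that are absent or not yet approved."""
    return [
        key for key in REQUIRED_EVIDENCE
        if not package.get(key, {}).get("approved", False)
    ]


# Example package: everything approved except the UAT sign-offs,
# and the deviation records have not been filed at all.
package = {key: {"approved": True} for key in REQUIRED_EVIDENCE}
package["uat_signoffs"] = {"approved": False}
del package["deviation_records"]

gaps = missing_evidence(package)
print("release blocked by:" if gaps else "evidence complete", gaps)
```

Running a check like this throughout delivery, rather than only at the end, is what keeps the gaps small by the time a release decision is due.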

When these pieces are prepared throughout delivery, audits become easier, change impact becomes clearer, and UAT stops being a late surprise. More importantly, teams gain a direct view of whether the product is actually ready for regulated use, not just ready for deployment.

That is the standard a regulated test strategy should meet: clear risk priorities, clean traceability, disciplined UAT, and evidence that stands up under scrutiny.

A test strategy for regulated software is a comprehensive plan that outlines the approach, resources, and processes used to ensure that software meets regulatory requirements. It includes risk-based testing, traceability, and user acceptance testing (UAT) to demonstrate compliance and product quality.

Risk-based testing prioritizes testing efforts based on the potential impact and likelihood of software failures. This approach ensures that critical functions and high-risk areas receive the most attention, helping organizations meet regulatory standards and reduce the risk of non-compliance.

Traceability links requirements, test cases, and defects throughout the software development lifecycle. This ensures that all regulatory requirements are tested and verified, providing clear evidence for audits and inspections.

Typical documentation includes a test strategy, test plans, traceability matrices, test cases, test results, and evidence of defect management. These documents are critical for demonstrating compliance during regulatory audits.

About author

Roman Bondarenko is the CEO of EVNE Developers. He is an expert in software development and technological entrepreneurship and has 10+ years of experience in digital transformation consulting in Healthcare, FinTech, Supply Chain, and Logistics.
